Complete Guide to DORA Metrics | What are DORA metrics?

August 22nd, 2022

Mean Time to Recovery (MTTR) explained

It's Friday afternoon, and you have mail. Apparently, a user received a 500 error when attempting to sign in. She contacted Customer Service. They didn't know what to do, so they forwarded the email to your engineering team. A close look at the email thread reveals that Customer Service received it... on Tuesday. And they sat on it until today.

Hopefully, it was just this one user. You open your browser, navigate to the web application, and attempt to sign in. You also get a 500 error. Perhaps it wasn't just this one user. At least it's only been happening since Tuesday; three days isn’t that bad.

You open up the server logs and dig around. Sadly, it looks like every sign-in attempt has been met by a 500 error. Every attempt... over the last 12 days. That's worse than you thought.

On the bright side, you quickly see what the issue is. You write a code fix and test it in less than an hour. Now, you just need to deploy it.

Oh, but Grant is the only team member who knows how to do production deployments with that new cloud provider you started using a few months ago. Grant is gone for the day, and he's on PTO all of next week. It's going to be 10 more days before he's back and able to deploy your fix.

Let’s do the math.

- 9 days of the issue going unnoticed (by your team)

- 3 days of communication lag between a user raising the issue and your team finding out about it

- 1 hour to write and test a fix

- 10 more days waiting to get the fix deployed to production

That's 22 days of users not able to sign in to your application. This kind of operational inefficiency is a business killer. And this is why mature DevOps teams track and improve Mean Time to Recovery (MTTR).
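To see where the time actually went, here is a minimal sketch that adds up the phases from the story above. The phase labels are just illustrative names for the bullets; the durations come straight from the list.

```python
from datetime import timedelta

# Phases of the sign-in outage, taken from the bullets above.
phases = {
    "unnoticed by the team": timedelta(days=9),
    "communication lag": timedelta(days=3),
    "write and test a fix": timedelta(hours=1),
    "waiting for deployment": timedelta(days=10),
}

total_downtime = sum(phases.values(), timedelta())

for name, duration in phases.items():
    # Dividing one timedelta by another yields a plain float ratio.
    share = duration / total_downtime
    print(f"{name}: {duration} ({share:.1%} of downtime)")

print(f"total: {total_downtime}")  # total: 22 days, 1:00:00
```

Writing and testing the fix is a rounding error. Almost all of the downtime comes from detection, communication, and deployment.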

Example of total days of downtime due to an engineering error.

In previous posts in this series, we’ve covered three of the four metrics from DORA.

In this post, we’ll walk through the fourth metric: MTTR (Mean Time to Recovery or Mean Time to Restore). We’ll look into the causes and impacts of poor MTTR performance and then provide guidance on how to improve this metric in your teams. Ultimately, a strong MTTR increases trust from your end users and stakeholders.

What MTTR is and isn’t

Time To Recover can be defined as “the time taken to restore a service that impacts users.” Mean Time to Recovery, therefore, is the average of that recovery time, taken across all incidents in an organization.
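As a rough sketch of that definition, the snippet below averages the time to recover across a handful of incidents. The `Incident` class, its field names, and the sample timestamps are assumptions made for illustration, not part of any DORA tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass(frozen=True)
class Incident:
    started_at: datetime   # when users were first impacted
    restored_at: datetime  # when service was restored

    @property
    def time_to_recover(self) -> timedelta:
        return self.restored_at - self.started_at


def mean_time_to_recovery(incidents: List[Incident]) -> timedelta:
    """Average time to recover across all recorded incidents."""
    if not incidents:
        raise ValueError("MTTR is undefined without at least one incident")
    total = sum((i.time_to_recover for i in incidents), timedelta())
    return total / len(incidents)


# Made-up sample data: outages of 30, 120, and 90 minutes.
incidents = [
    Incident(datetime(2022, 8, 1, 9, 0), datetime(2022, 8, 1, 9, 30)),
    Incident(datetime(2022, 8, 9, 14, 0), datetime(2022, 8, 9, 16, 0)),
    Incident(datetime(2022, 8, 17, 22, 0), datetime(2022, 8, 17, 23, 30)),
]

print(mean_time_to_recovery(incidents))  # 1:20:00
```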

Incident response, not prevention

MTTR focuses on responding to incidents, not preventing them. Incident prevention is tracked by a different metric altogether: Mean Time Between Failures (MTBF). While MTBF is not one of DORA’s key metrics, it still provides value. If a team sees MTBF tick downwards—meaning failures are occurring more frequently—this is a sign that the system isn’t working well, and it’s time to find out why.

Organizations that fixate on preventing issues in order to push their MTBF up might find themselves blowing up their MTTR instead. If you prevent change because you’re concerned about causing incidents, then it’s hard to improve services operationally. Before long, you may encounter a perfect storm of deferred maintenance issues that will take down your system.
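For comparison with MTTR, here is a minimal sketch of an MTBF calculation. It assumes one simple convention, the average gap between the start times of consecutive failures; other definitions exist (for example, total operating time divided by the number of failures), and the function name and sample dates are illustrative only.

```python
from datetime import datetime, timedelta
from typing import List


def mean_time_between_failures(failure_starts: List[datetime]) -> timedelta:
    """Average gap between the start times of consecutive failures."""
    if len(failure_starts) < 2:
        raise ValueError("need at least two failures to measure a gap")
    ordered = sorted(failure_starts)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return sum(gaps, timedelta()) / len(gaps)


# Made-up sample data: failures 10 days apart, then 20 days apart.
failure_starts = [
    datetime(2022, 1, 1),
    datetime(2022, 1, 11),
    datetime(2022, 1, 31),
]

print(mean_time_between_failures(failure_starts))  # 15 days, 0:00:00
```

A shrinking result over time means failures are arriving more often, which is the warning sign described above.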

MTTR performance benchmarks

The original DORA research asked this question:

For the primary application or service you work on, how long does it generally take to restore service when a service incident or a defect that impacts users occurs (such as an unplanned outage or service impairment)?

Respondents could choose from the following options:

- Less than one hour

- Less than one day

- Less than one week

- Between one week and one month

- One to six months

- More than six months

The research from DORA shows that:

- Elite performers are back up and running in less than one hour.

- High and Medium performers restore service in less than one day.

- Low performers take between a week and a month to restore service.

Length of time it takes teams of various levels to recover from an unplanned outage.

The difference between Elite and High performance is stark. Meanwhile, breaking a service for more than seven days points to significant dysfunction in an organization.
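If you want to see where your own numbers land, the benchmarks can be expressed as a small lookup. This is only a sketch: the function name is hypothetical, treating "a month" as 31 days is an assumption, and the summary above does not assign a tier to restore times between one day and one week, so the sketch reports those as unclassified rather than guessing.

```python
from datetime import timedelta


def performance_tier(time_to_restore: timedelta) -> str:
    """Map a typical time to restore onto the tiers summarized above."""
    if time_to_restore < timedelta(hours=1):
        return "Elite"
    if time_to_restore < timedelta(days=1):
        return "High/Medium"
    # Assumption: "a month" is approximated as 31 days.
    if timedelta(weeks=1) <= time_to_restore <= timedelta(days=31):
        return "Low"
    # Not covered by the summary (1 day to 1 week, or over a month).
    return "Unclassified"


print(performance_tier(timedelta(minutes=30)))        # Elite
print(performance_tier(timedelta(hours=5)))           # High/Medium
print(performance_tier(timedelta(days=22, hours=1)))  # Low
```

The 22-day sign-in outage from the opening story lands squarely in the Low tier.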

The causes of poor MTTR

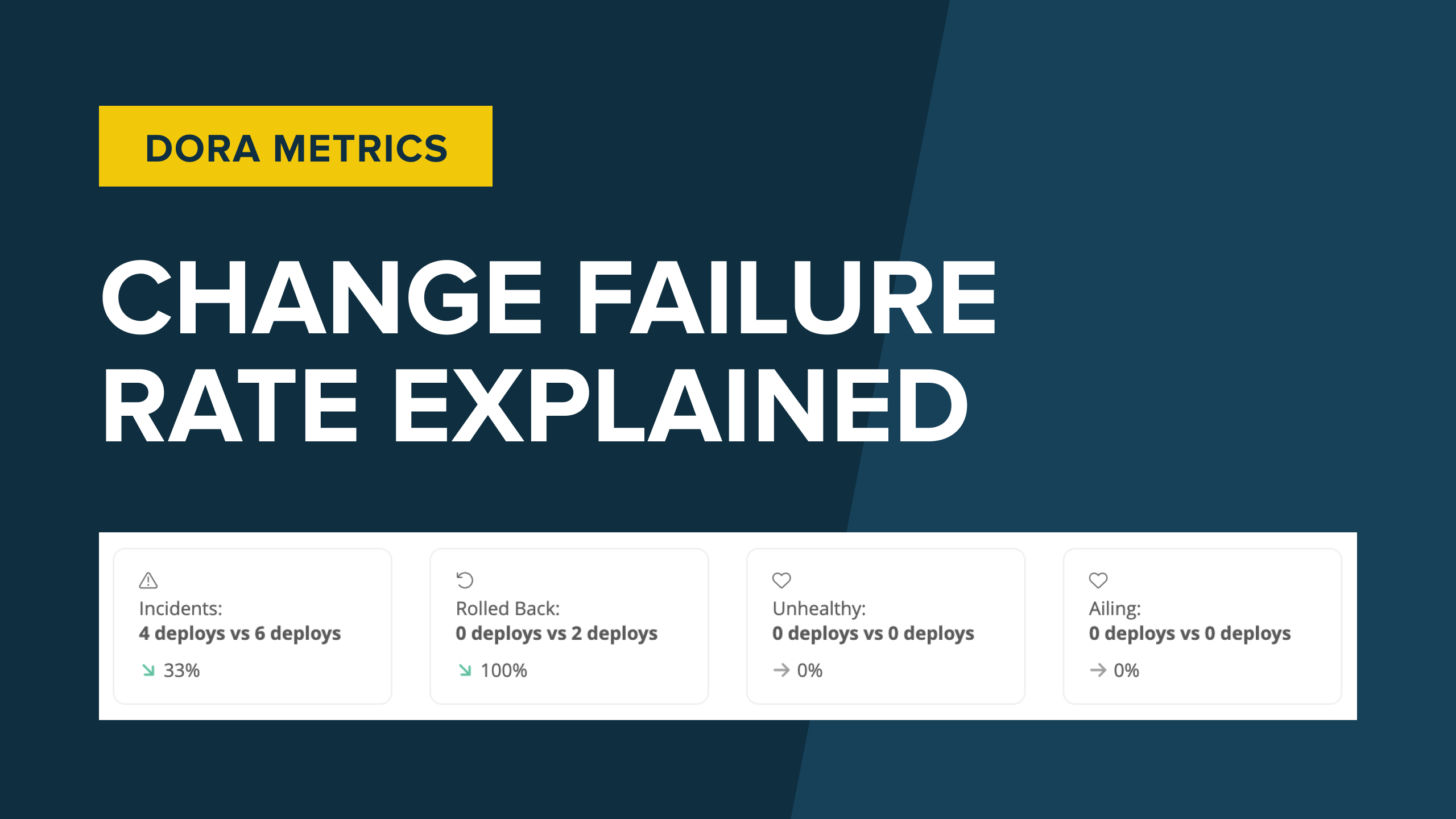

The dysfunction that a poor Mean Time to Recovery surfaces is not low-quality code. You may have robust and well-tested code that hardly ever breaks. If so, then your Change Failure Rate metric might be quite strong. However, when your application does break, and your team doesn’t have good processes to quickly detect an issue, create a fix, and ship it out, your MTTR will make this weakness abundantly clear.

What might be some of the causes of poor MTTR?

Poor tooling for problem discovery

Keep in mind that the time measured for determining MTTR begins as soon as your system becomes unavailable, not when you’ve discovered that your system is unavailable. Slow problem discovery will result in slow recovery.

Mean Time to Detection (MTTD), also known as Mean Time to Discovery, tracks how long it takes to detect that your system has a problem. MTTD contributes to MTTR.
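To illustrate how detection time sits inside recovery time, here is a small sketch using the sign-in outage from the start of this post. The `IncidentTimeline` class and the calendar date are assumptions for illustration; the durations (12 days until the team found out, 22 days and 1 hour until the fix shipped) come from the story.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class IncidentTimeline:
    started_at: datetime   # system became unavailable
    detected_at: datetime  # team learned about the problem
    restored_at: datetime  # service was restored

    @property
    def time_to_detect(self) -> timedelta:
        return self.detected_at - self.started_at

    @property
    def time_to_recover(self) -> timedelta:
        # The clock starts at the outage, not at detection.
        return self.restored_at - self.started_at


def detection_share(timeline: IncidentTimeline) -> float:
    """Fraction of the recovery time spent before anyone noticed."""
    return timeline.time_to_detect / timeline.time_to_recover


start = datetime(2022, 8, 1, 9, 0)  # hypothetical date
timeline = IncidentTimeline(
    started_at=start,
    detected_at=start + timedelta(days=12),
    restored_at=start + timedelta(days=22, hours=1),
)

print(f"{detection_share(timeline):.0%} of the outage passed before detection")
```

In that scenario, more than half of the outage had already elapsed before the engineering team knew anything was wrong.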

Problem discovery is challenging for organizations that don’t have proper tooling in place. Without uptime monitoring, helpdesk tools, periodic testing, or alerting, a DevOps team may have little way of knowing—apart from a happenstance website check or an email from an exasperated end user—when its application is down.

No clear plan for incident management

Once an issue has been detected and acknowledged, who’s in charge and what steps need to be taken? When an incident occurs, a DevOps team will start feeling the combined stress of a system outage, frustrated end users, and disappointed business stakeholders. If that team doesn’t already have a plan in place for how to handle an incident, then the time spent coming up with a plan will count against their MTTR.

Cumbersome and slow deployment processes

Imagine your DevOps team quickly detects an incident and writes a fix for it. Your team is on its way to quick recovery and elite-level MTTR. However, Bob is the sole engineer who knows how to perform deployments (manually), and he just went on his two-week honeymoon. Either MTTR will suffer, or Bob’s marriage will suffer. Your choice.

We talked about automated deployments as part of the Deployment Frequency metric. A manual deployment process filled with steps that require human intervention will negatively impact MTTR just as much as it will Deployment Frequency.

How to improve MTTR

There are many ways to improve Mean Time to Recovery. Although many downtime issues manifest at runtime, making it easy to blame the systems team who might deploy these applications, many MTTR improvements can start with developers.

Make code and error messages readable

For a strong MTTR metric, a team needs to pinpoint the issue quickly so that it can implement a fix. Sometimes, the only information available for investigating an issue is a stack trace. If that’s the case, then a team might be able to look at the line numbers from the stack trace to figure out what is going on. Good class and method names help significantly here.

If the code is written to log errors, then those error messages should be free of spelling mistakes and work to provide helpful context. When writing code, ask yourself, “If future-me is reading the error message being logged here, what would be the most helpful context information to provide to future-me?”
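As one possible illustration, the sketch below logs a sign-in failure with the kind of context future-you would want: what failed, for whom, which dependency was involved, and what the user saw. The session store, its host name, and the function names are all hypothetical stand-ins; only the standard library `logging` calls are real.

```python
import logging
from typing import Optional

logger = logging.getLogger("signin")


class SessionStoreUnavailable(Exception):
    """Raised when the (hypothetical) session store cannot be reached."""


def create_session(user_id: str, store_host: str, store_up: bool) -> str:
    # Stand-in for a real call to a session store.
    if not store_up:
        raise SessionStoreUnavailable(f"connection refused by {store_host}")
    return f"session-for-{user_id}"


def sign_in(user_id: str, store_host: str, store_up: bool = True) -> Optional[str]:
    try:
        return create_session(user_id, store_host, store_up)
    except SessionStoreUnavailable:
        # logger.exception logs at ERROR level and appends the stack trace,
        # so the message itself can focus on context.
        logger.exception(
            "Sign-in failed for user_id=%s: session store %s unreachable; "
            "responding with HTTP 500",
            user_id,
            store_host,
        )
        return None
```

Compare that message with a bare "Error in sign_in". The first tells whoever is on call where to look; the second sends them digging.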

Put monitoring in place today and enhance it tomorrow

It’s terrible when your users discover errors. It’s worse when they aren’t believed. A basic monitoring page that proves the critical subsystems are available can usually be built in an afternoon. It shouldn’t expose any detail beyond a simple “OK” or “Not OK” message. Dozens of monitoring systems are available to retrieve such pages and alert when they report “Not OK”. You can add more nuanced testing later, but start with the basics.
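A monitoring page along those lines might look like the sketch below, built only on Python's standard library. The `/health` path, the port, and the two placeholder checks are assumptions; in a real service, each check would probe an actual subsystem.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Callable, Dict, Tuple


def database_ok() -> bool:
    return True  # placeholder: replace with a cheap real query


def cache_ok() -> bool:
    return True  # placeholder: replace with a real ping


CHECKS: Dict[str, Callable[[], bool]] = {
    "database": database_ok,
    "cache": cache_ok,
}


def health_status(checks: Dict[str, Callable[[], bool]]) -> Tuple[int, str]:
    """Return (HTTP status, body) without leaking which check failed."""
    for check in checks.values():
        try:
            if not check():
                return 503, "Not OK"
        except Exception:
            # A check that crashes counts as a failed check.
            return 503, "Not OK"
    return 200, "OK"


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path != "/health":
            self.send_error(404)
            return
        status, body = health_status(CHECKS)
        payload = body.encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8000) -> None:
    """Serve the monitoring page until interrupted."""
    HTTPServer(("127.0.0.1", port), HealthHandler).serve_forever()
```

Calling `serve()` starts the page on port 8000. Returning a 503 status alongside the “Not OK” body means even the simplest monitoring service, one that only looks at status codes, will notice the failure.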

Sleuth dashboard showing Mean Time to Recovery

Review logs

Plenty of systems are available to aggregate log messages, and many even use machine learning to tell you that something has changed. If developers eat the dog food of their logs, they’ll see how error messages can confuse an operations team.

Have a process for managing incidents

Incidents are work that should be managed, often in stressful circumstances. It should be clear in the heat of the moment who is running an incident, who is dealing with stakeholders, and who is responsible for the diagnosis and remedy of the service.

Plan for failure

Sometimes, you need to take a step back from the natural optimism of delivering services (“This feature is going to bring in $50K ARR! Let’s get it done!”) to some healthy pessimism (“If the database stops performing under load, how will we make the front end return something intelligible to the users?”). This takes planning. By planning for potential failures, you reduce your time to detect and reduce the lag time it takes to write and ship fixes.
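Here is one way that healthy pessimism might look in code: a sketch of a handler that returns something intelligible when the database misbehaves. The order-history feature, the `query_orders` callable, and the response shape are all hypothetical.

```python
import logging
from typing import Callable, Dict, List

logger = logging.getLogger("orders")

FRIENDLY_MESSAGE = (
    "Order history is temporarily unavailable. "
    "Please try again in a few minutes."
)


def order_history_response(
    user_id: str,
    query_orders: Callable[[str], List[str]],
) -> Dict[str, object]:
    """Build a response that stays intelligible if the database struggles."""
    try:
        orders = query_orders(user_id)
    except (TimeoutError, ConnectionError) as exc:
        # Planned-for failure: log context for the team, and give the
        # user a clear message instead of a bare 500 error.
        logger.warning("Order lookup degraded for user_id=%s: %s", user_id, exc)
        return {"status": 503, "orders": [], "message": FRIENDLY_MESSAGE}
    return {"status": 200, "orders": orders, "message": ""}


def overloaded_database(user_id: str) -> List[str]:
    # Simulates a database that stops responding under load.
    raise TimeoutError("query exceeded 2s budget")


print(order_history_response("u42", overloaded_database)["message"])
```

The warning log doubles as a detection signal, so the same planning that protects users also shortens your time to detect.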

The dos and don’ts of MTTR

Just like with any of our other DORA metrics, a team can game the system to make its MTTR metric look good, but this will reveal itself as other metrics subsequently slip. Let’s consider some of the dos and don'ts of MTTR.

Do: Monitor system uptime/downtime

If your application has a monitoring URL and an external monitoring service like Uptime Robot that can prove the application isn’t available, then you can be quickly notified as soon as your application begins to experience an outage. Reducing your MTTD will help reduce your MTTR. In addition, uptime monitoring will help with tracking and measuring your MTTR.

It’s also wise to send that incident data to tools that can help you track and manage it. For example, integrations can be built to open a Jira ticket when an incident occurs. When monitoring is connected to alerting, you can begin automating the initial steps of your incident management plan.
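To make the idea concrete, here is a rough sketch of what an external monitor does: probe the monitoring URL, notice when the application goes down, fire an alert once, and record the outage window for later MTTR reporting. The `OutageTracker` class and the `on_outage_start` callback are hypothetical; in practice the callback might page someone or open a ticket through whatever integration you use.

```python
import urllib.request
from datetime import datetime, timedelta, timezone
from typing import Callable, List, Optional, Tuple


def probe(url: str, timeout: float = 5.0) -> bool:
    """Return True only if the page answers 200 with the body 'OK'."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            body = response.read().decode("utf-8").strip()
            return response.status == 200 and body == "OK"
    except (OSError, ValueError):
        # URLError, HTTPError, and timeouts are all OSError subclasses;
        # ValueError covers malformed URLs and undecodable bodies.
        return False


class OutageTracker:
    def __init__(self, on_outage_start: Callable[[datetime], None]) -> None:
        self._on_outage_start = on_outage_start
        self._down_since: Optional[datetime] = None
        self.outages: List[Tuple[datetime, datetime]] = []

    def record(self, is_up: bool, now: datetime) -> None:
        if not is_up and self._down_since is None:
            self._down_since = now
            self._on_outage_start(now)  # alert once per outage
        elif is_up and self._down_since is not None:
            self.outages.append((self._down_since, now))
            self._down_since = None


# Simulated probe results, one every five minutes.
alerts: List[datetime] = []
tracker = OutageTracker(on_outage_start=alerts.append)
t0 = datetime(2022, 8, 22, 12, 0, tzinfo=timezone.utc)
for minutes, is_up in [(0, True), (5, False), (10, False), (15, True)]:
    tracker.record(is_up, t0 + timedelta(minutes=minutes))

down, up = tracker.outages[0]
print(f"alerts sent: {len(alerts)}, outage lasted {up - down}")
```

In a real monitor, the loop would call `probe(url)` on a schedule instead of replaying simulated results. Note that the measured outage can only be as precise as the polling interval.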

Don’t: Use shortsighted duct-tape solutions just to reduce MTTR

If all you care about is MTTR, then you might be tempted to cobble together a quick fix just to get the system back online. Sure, a duct-tape hack might get your MTTR down to one hour, but the buggy solution just means the application goes down again several hours later. Your MTTR may be strong, but your MTBF will plummet, and your Change Failure Rate will skyrocket. It’s not worth it.

Do: Improve your Deployment Frequency

If your team only deploys changes once a month, what will you do if an outage occurs just a few days after the last deployment, and you need to ship a fix? You certainly can’t wait until next month to deploy the patch. However, if you only deploy once a month, your team probably doesn’t have a lot of practice (or the culture) to perform a quick deployment confidently. It will take you longer to deploy the fix, and your MTTR will suffer.

However, if your team has a high Deployment Frequency—for example, daily or (gasp!) hourly—then adding a code patch to the next hour’s deployment will be no big deal. A strong Deployment Frequency metric contributes to a strong MTTR.

Do: Take advantage of feature flags

A feature flag is a toggle that lets you turn a feature in production on or off. For example, should users be able to access the new product rating UI? If the feature flag is toggled on, then yes. If it’s off, then users will see the old UI.

How does this relate to MTTR? Let’s say a new feature or code change is about to be deployed. Is there a possibility that this change might result in a broken application? If so, put it behind a feature flag. As soon as your team detects an incident, toggle the feature flag off. Just like that, the application is up and running again. Your MTTR is strong, your end users are not frustrated, and your team has the stress-free margin to investigate the issue.
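A feature flag can be as simple as the sketch below. The `FeatureFlags` class and the flag name are hypothetical; real teams often use a flag service or a configuration store so that toggling doesn't require a deployment.

```python
from typing import Dict


class FeatureFlags:
    """In-memory flag store; a stand-in for a real flag service."""

    def __init__(self, initial: Dict[str, bool]) -> None:
        self._flags = dict(initial)

    def is_enabled(self, name: str) -> bool:
        # Unknown flags default to off, the safer choice for new code.
        return self._flags.get(name, False)

    def set(self, name: str, enabled: bool) -> None:
        self._flags[name] = enabled


def render_product_rating(flags: FeatureFlags) -> str:
    if flags.is_enabled("new_product_rating_ui"):
        return "new rating UI"
    return "old rating UI"


flags = FeatureFlags({"new_product_rating_ui": True})
print(render_product_rating(flags))  # new rating UI

# Incident detected: toggle the flag off instead of shipping a fix.
flags.set("new_product_rating_ui", False)
print(render_product_rating(flags))  # old rating UI
```

Recovery here is a single toggle rather than a write, test, and deploy cycle, which is exactly why it helps MTTR.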

Conclusion

MTTR is a vital metric for tracking how your team is responding to failures. We’ve covered what MTTR is, how to improve your MTTR metrics, and where to focus your efforts.

If someone uses a service that you have worked on, then they are giving you a certain amount of trust. They’re using that service for their job or entertainment or some purpose even more serious. Every time they use your service, you are building trust.

Service failures erode that trust. Therefore, minimizing the impact of failures by restoring services quickly is the key. You can’t stop failure, but you can get better at responding to it. Being skilled in building systems that come back after failure is great for everyone: you, your team, and your end users.